As we look to move to a microservice environment at $job, several requirements came up, such as mutual TLS between services, distributed tracing, and locking down traffic. A service mesh provides a solution for securing and monitoring traffic as it flows through the cluster. After talking with several folks who have implemented or used service meshes, the choice came down to Consul Connect or Istio.

Consul Connect

An internal team uses Consul for their testing environment, so going in there was already a level of expertise within the organization. Consul would plug right into our current build workflow, since it deploys with Helm. Once deployed, an Envoy sidecar runs inside each pod to proxy traffic between pods.

Deployment

For the helm chart I used the following value overrides:

    global:
      name: consul
      datacenter: dc1
      # Enable all services to run on TLS
      tls:
        enabled: true
    # Sync services from k8s to consul (more complete picture)
    syncCatalog:
      enabled: true
    # Default Inject Sidecar proxy into all containers
    connectInject:
      enabled: true
      default: true
      centralConfig:
        enabled: true
    client:
      enabled: true
    # Highly available stack that enables telemetry
    server:
      replicas: 3
      bootstrapExpect: 3
      storageClass: nfs-client
      extraConfig: |
        {
          "telemetry": {
            "prometheus_retention_time": "24h",
            "disable_hostname": true
          }
        }
      affinity: |
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchLabels:
                  app: {{ template "consul.name" . }}
                  release: "{{ .Release.Name }}"
                  component: server
              topologyKey: kubernetes.io/hostname

Test Application

For a test app, I used Consul's counting and dashboard demo services. The general idea is that the dashboard app calls the counting app to get a number to display. To let Envoy proxy to other services, you add annotations to the pod. For this app, adding the annotation below proxies all localhost:9001 calls to the counting service over mutual TLS:

"consul.hashicorp.com/connect-service-upstreams": "counting:9001"

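To show where the annotation lives, here is a minimal sketch of a dashboard pod. The pod name, image tag, container port, and the COUNTING_SERVICE_URL variable are assumptions based on the HashiCorp demo app rather than details from this setup, so adjust them to your own workload.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: dashboard            # hypothetical pod name
  annotations:
    # Format is <upstream-service>:<local-port>; the sidecar listens on
    # localhost:9001 and forwards to the "counting" service over mTLS.
    "consul.hashicorp.com/connect-service-upstreams": "counting:9001"
spec:
  containers:
    - name: dashboard
      image: hashicorp/dashboard-service:0.0.4   # assumed demo image and tag
      ports:
        - containerPort: 9002                    # assumed dashboard port
          name: http
      env:
        # Assumed env var from the demo app: point it at the local sidecar
        # listener, not at the counting service directly.
        - name: COUNTING_SERVICE_URL
          value: "http://localhost:9001"
```

Because the Helm values above set connectInject.default to true, no separate inject annotation should be needed for the sidecar to be added.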
Analyzing Traffic Rules

Consul uses intentions to define which services are allowed to talk to each other. One of my tests was to deny traffic from the dashboard to the counting service. Once the deny intention was in place, the traffic was denied and the dashboard could no longer retrieve a count.
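As a rough illustration of that deny rule, newer consul-k8s releases let you express an intention as a ServiceIntentions custom resource. This is only a sketch under that assumption: the resource name is hypothetical, and it is not how the intention was created in this test, where intentions went through Consul itself.

```yaml
apiVersion: consul.hashicorp.com/v1alpha1
kind: ServiceIntentions
metadata:
  name: dashboard-to-counting   # hypothetical resource name
spec:
  destination:
    name: counting              # service receiving the traffic
  sources:
    - name: dashboard           # service initiating the traffic
      action: deny              # switch to "allow" to permit it again
```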

However, one thing I discovered was that this restriction could be bypassed by changing the URL from localhost:9001 to counting:9001. That uses kube-dns for service discovery and routes the traffic to the service directly.

Distributed Tracing

After reading this blog post, I wanted to implement distributed tracing in the environment. The HashiCorp example made it seem extremely simple to implement; however, I ran into several hurdles:

- Proxy escaping characters

- The Helm chart doesn't natively support tracing, so I had to deploy Zipkin myself

- I found a quote from logz.io that made me pivot away from Zipkin to Jaeger:

If Kubernetes is running in production, then adopt Jaeger. If there’s no existing container infrastructure, then Zipkin makes for a better fit because there are fewer moving pieces.

Istio

Going into Istio, the only thing I had ever heard about it was summed up in Ivan Pedrazas's tweet:

"Daddy, tell me a horror story"

"SSL with Istio and Kubernetes"

"Is it as bad as the NFS monster one?"

"Oh no, nothing is worse than the NFS monster"

However, I wanted to keep an open mind and had a discussion with IBM's JJ Asghar and a mentor, Drew Mullen. Through that discussion, I learned that Istio has improved vastly since those horror stories, notably with a simplified control plane. This was enough to start exploring how to incorporate it into our environment and perform the same analysis as the Consul Connect test.

Deployment

As of 1.5, Istio deprecated Helm-based installation in favor of its own CLI tool, istioctl, which consolidates many individual charts. This will call for some rework of our existing pipeline, but the tool itself is fairly simple. Using profiles, you can choose which components get installed.

| | default | demo | minimal | remote |
|---|---|---|---|---|
| Core components | | | | |
| istio-egressgateway | | X | | |
| istio-ingressgateway | X | X | | |
| istiod | X | X | X | |
| Addons | | | | |
| grafana | | X | | |
| istio-tracing | | X | | |
| kiali | | X | | |
| prometheus | X | X | | X |

For this use case, I wanted a minimal footprint but still wanted to add a few additional components. Luckily, istioctl allows for that by layering overrides on top of the default profile. Since we have a few overrides, we'll create a config file:

    # install.yml
    apiVersion: install.istio.io/v1alpha1
    kind: IstioOperator
    spec:
      values:
        tracing:
          enabled: true
        kiali:
          enabled: true

    istioctl install --set profile=default -f install.yml

Once installed, creating a namespace with the label istio-injection: enabled, or adding the pod annotation sidecar.istio.io/inject: "true", will ensure pods get the Envoy sidecar.
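As a minimal sketch of the namespace approach, the manifest below creates a namespace with the injection label. The namespace name is a placeholder, not the one used in this test.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: demo                   # hypothetical namespace name
  labels:
    # Tells Istio's sidecar injector to add the Envoy proxy to pods
    # created in this namespace.
    istio-injection: enabled
```

Pods that already existed before the label was applied need to be recreated to pick up the sidecar, since injection happens at pod creation time.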

Analyzing Traffic Rules

With the Envoy proxy sidecar enabled, mTLS comes for free. However, there are additional Custom Resource Definitions (CRDs) that you can use to enforce that your environment is secure.

Mutual TLS

To enforce mutual TLS, define the PeerAuthentication and DestinationRule resources. The configuration below requires all communication within a given $NAMESPACE to use mTLS, while enforcing that the app: dashboard-counter workload uses it.

    ---
    apiVersion: "security.istio.io/v1beta1"
    kind: "PeerAuthentication"
    metadata:
      name: "require-mtls-dashboard"
      namespace: istio-system
    spec:
      selector:
        matchLabels:
          app: dashboard-counter
      mtls:
        # Several modes available (STRICT, PERMISSIVE, UNSET)
        mode: STRICT
    ---
    apiVersion: "networking.istio.io/v1alpha3"
    kind: "DestinationRule"
    metadata:
      name: "default"
    spec:
      host: "*.$NAMESPACE.svc.cluster.local"
      trafficPolicy:
        tls:
          mode: ISTIO_MUTUAL

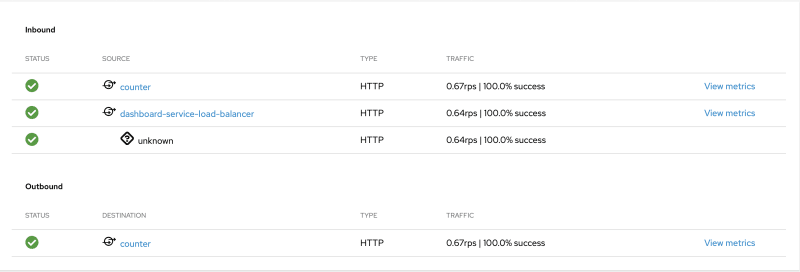

Kiali provides a nice visualization of all this: you can see the entire communication path, from the unmarked ingress to the counter service, going over TLS.
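For comparison, here is a sketch of a namespace-wide variant. A PeerAuthentication without a selector applies to every workload in its namespace, so it would require mTLS for all sidecar-injected pods there. The resource name is an assumption, and $NAMESPACE is the same placeholder used above.

```yaml
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default              # assumed name for a namespace-wide policy
  namespace: $NAMESPACE      # placeholder: substitute the target namespace
spec:
  # No selector: the policy covers every workload in the namespace.
  mtls:
    mode: STRICT             # reject plaintext traffic to these workloads
```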

Authorization

If you look at various sources for best practices, one common recommendation is a default deny-all. This is implemented through an AuthorizationPolicy:

    apiVersion: security.istio.io/v1beta1
    kind: AuthorizationPolicy
    metadata:
      name: deny-all
      namespace: $NAMESPACE
    spec:
      {}

Once a deny-all is in place, all requests will receive an "RBAC: access denied" response. You can then define another AuthorizationPolicy to allow the communication you want.

    apiVersion: security.istio.io/v1beta1
    kind: AuthorizationPolicy
    metadata:
      name: allow-method-get
    spec:
      selector:
        matchLabels:
          app: dashboard-counter
      action: ALLOW
      rules:
        - {}
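Note that an empty rule (`- {}`) matches every request to the selected workload, regardless of method. If the goal is to allow only GET requests, as the policy name suggests, a tighter rule could look like the sketch below. The policy name and the $NAMESPACE placeholder are assumptions for illustration.

```yaml
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: allow-get-only       # hypothetical name
  namespace: $NAMESPACE      # placeholder: substitute the target namespace
spec:
  selector:
    matchLabels:
      app: dashboard-counter
  action: ALLOW
  rules:
    # Only requests using the GET method match this rule; with the
    # deny-all policy in place, everything else stays denied.
    - to:
        - operation:
            methods: ["GET"]
```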

Distributed Tracing

With Istio, distributed tracing comes as a configuration flag. By using the default profile with tracing enabled, Jaeger is installed into the cluster, and you can visualize traces through Kiali.

Summary

After analyzing Istio vs. Consul, a lot of the features I was looking for seemed to come out of the box with Istio. The benefit of using CRDs instead of API calls also weighed heavily, since no additional auth system comes into play. With Consul, although it was nice to plug in with Helm, the ability to bypass intentions through service discovery was ultimately the deal-breaker.
